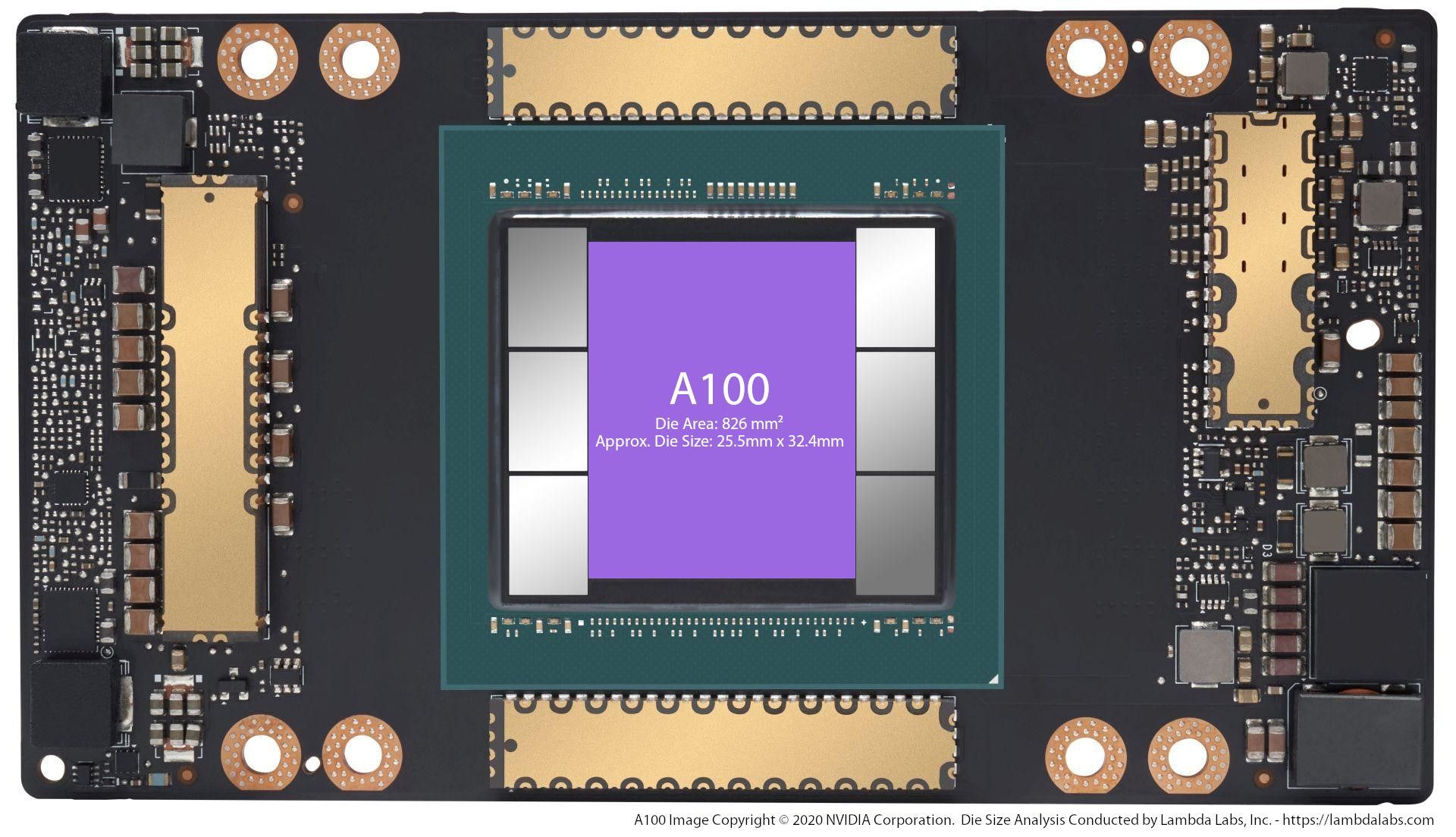

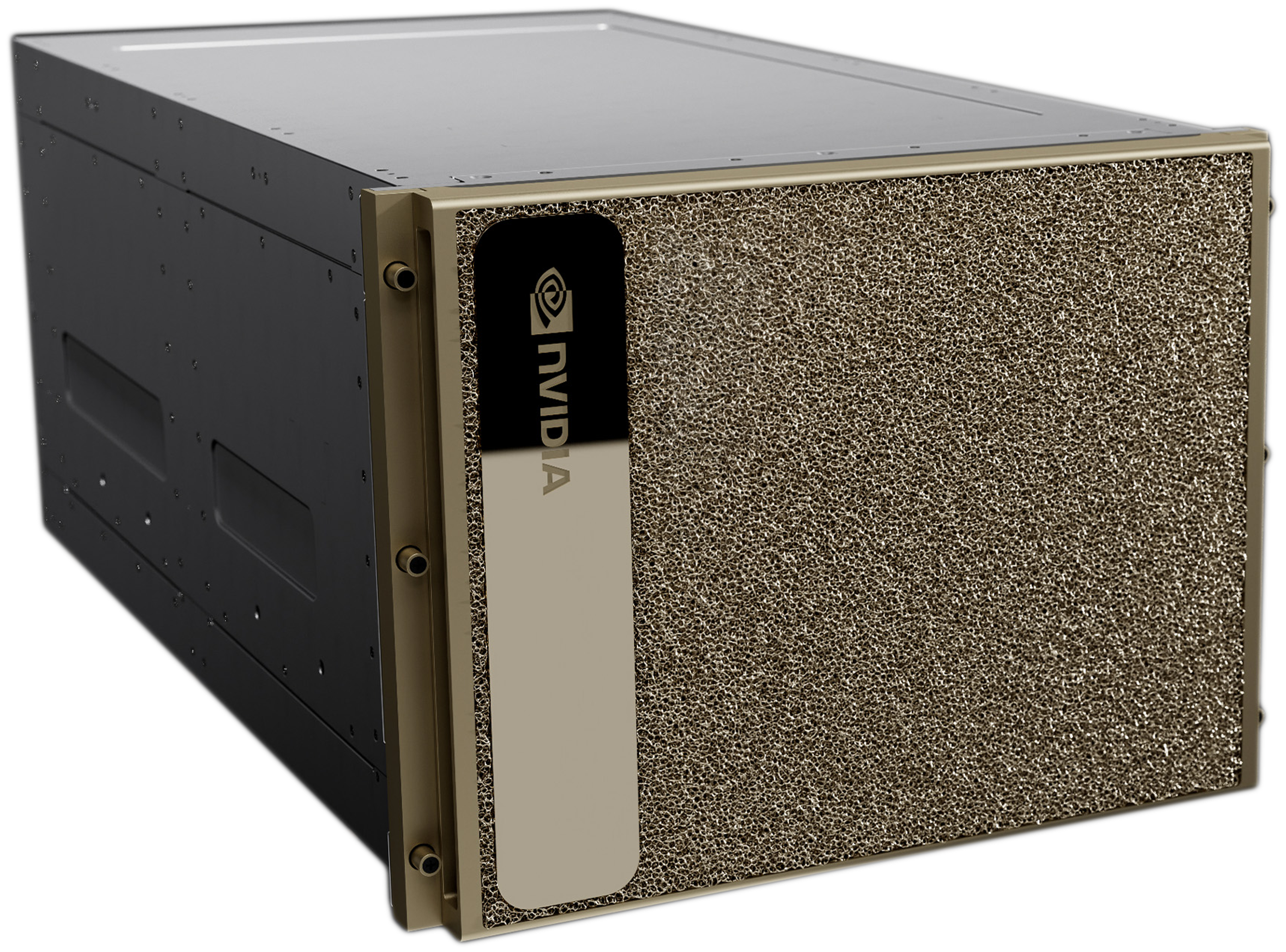

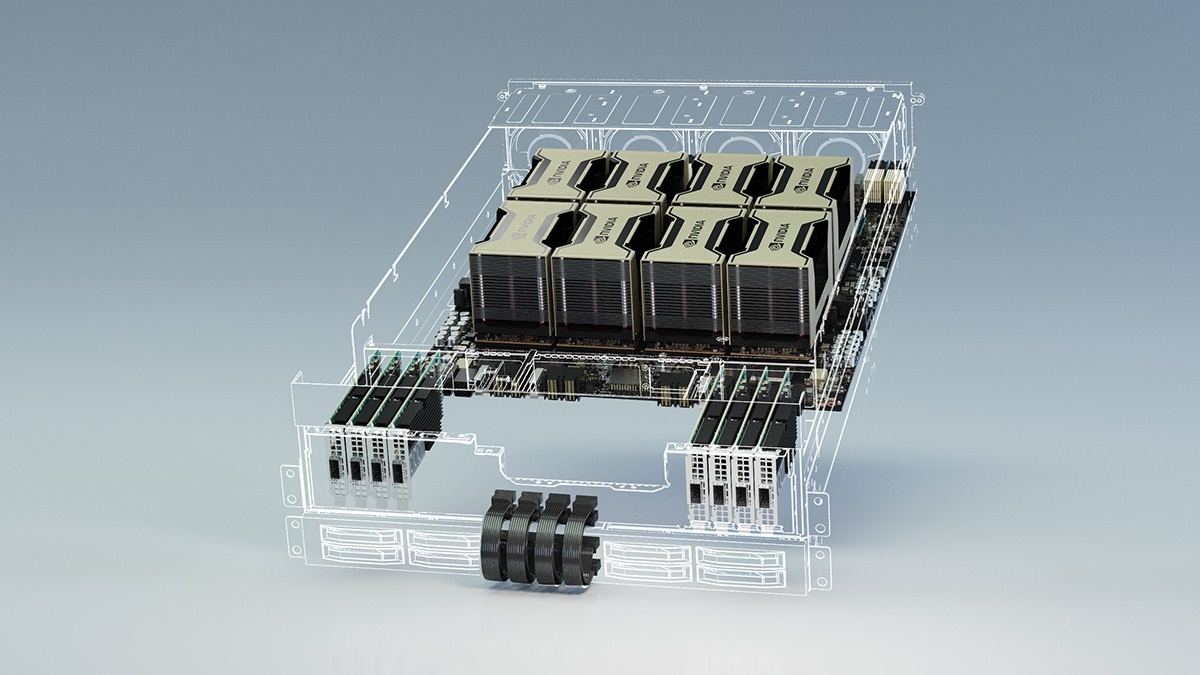

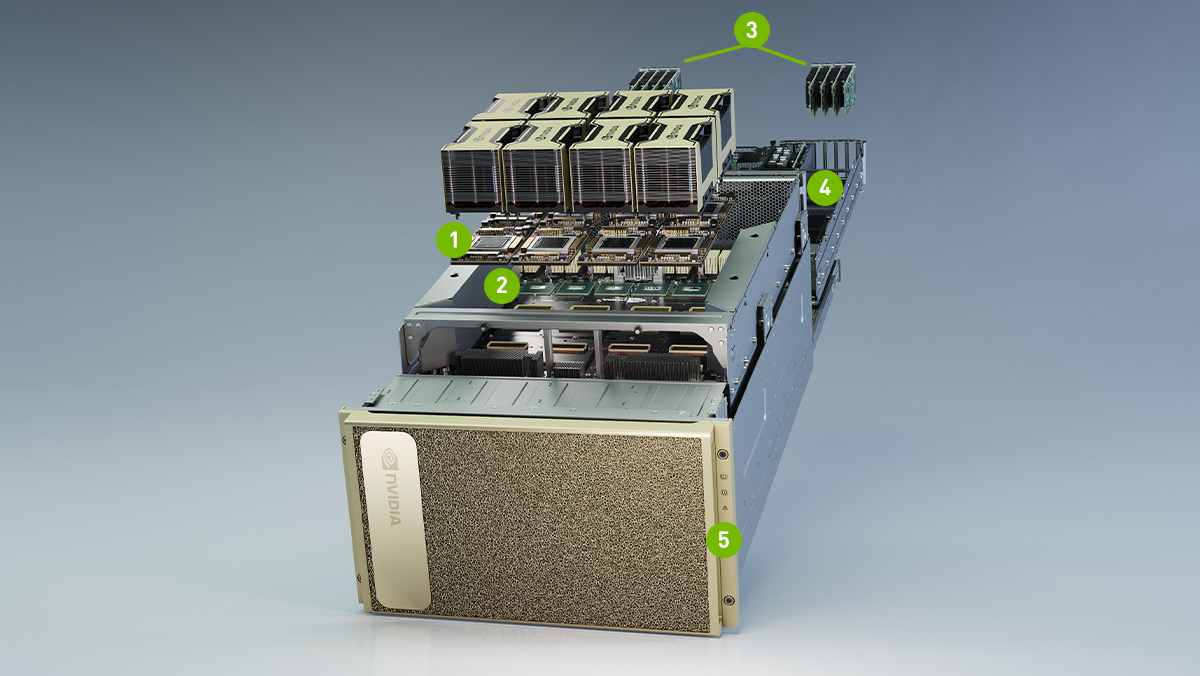

Best Deep Learning NVIDIA GPU AI Server in 2022-2023 – up to 8x water-cooled NVIDIA H100, A100, A6000, 6000 Ada, RTX 4090, or Quadro RTX 8000 GPUs and dual AMD EPYC processors.

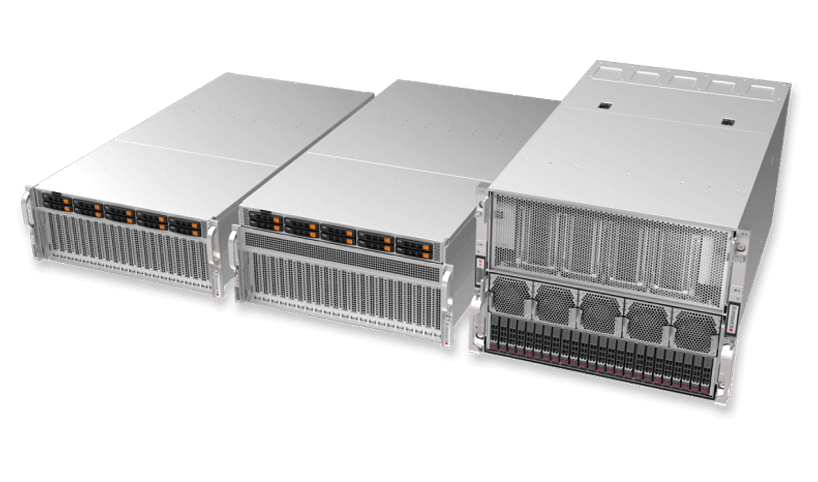

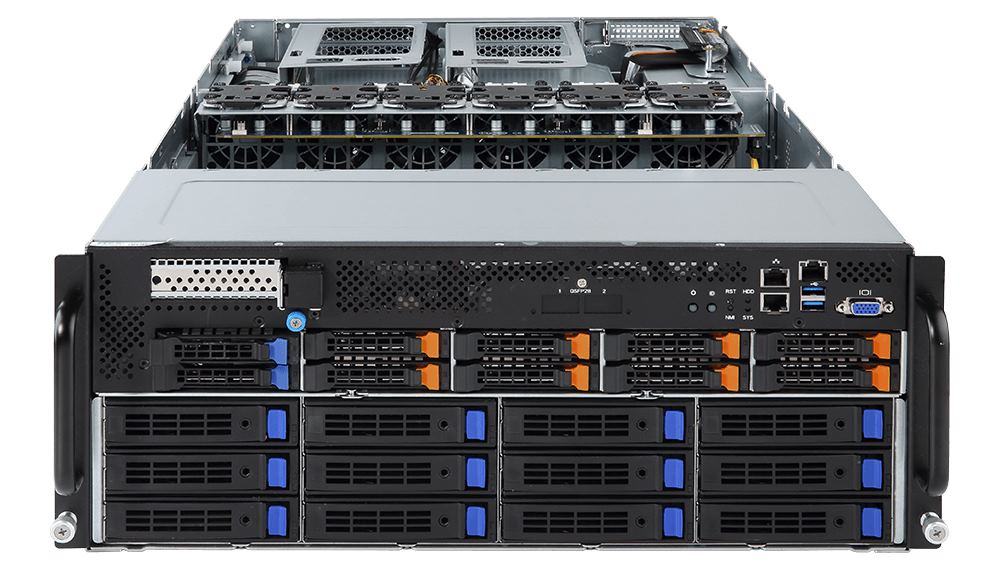

BIZON G7000 G3 – 8-GPU NVIDIA A6000, A5000, A100 Dual Xeon Server for Deep Learning and AI | Best Deep Learning Server in 2023

BIZON X8000 G2 – AMD EPYC 9004-Series Server – Scientific Research and Deep Learning AI GPU Server – Up to 4 GPUs, Up to 96 CPU Cores

How to use NVIDIA GPUs for Machine Learning with the new Data Science PC from Maingear | by Déborah Mesquita | Towards Data Science

%20Pages/deep-learning-server.jpeg)